Google made Gemini an 'over cautious' middle manager — leading to inaccurate images and backlash

Google was hit with complaints and backlash from users this week after its Gemini AI chatbot started to create historically inaccurate pictures of people.

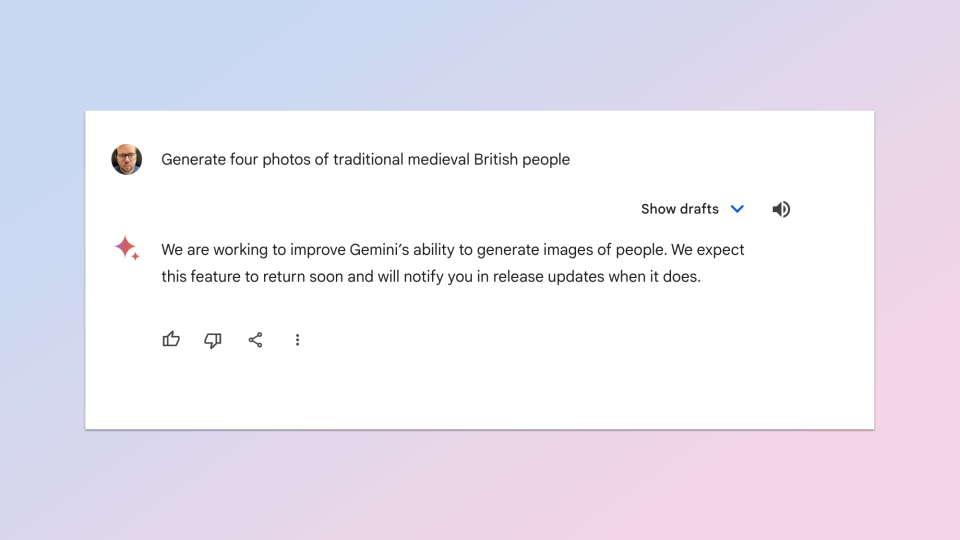

The AI also started refusing to generate certain images where race was involved, and this led the search giant to disable Gemini’s ability to create any image of a person: living, dead or fictional.

There are already restrictions on what Gemini can create, including limits on producing depictions of real people. The problem is, those restrictions seem to be set too tight and this led to major backlash.

Google says it will resolve the problem and is currently investigating a safe way to adjust the instructions.

What went wrong with Gemini?

Gemini itself doesn’t actually create the images. The chatbot acts like a middle manager, taking the requests, sprinkling its own interpretation on the prompt and sending it off to Imagen 2, the artificial intelligence image generator built by Google’s DeepMind AI lab.

The problems seem to come from this middle, sprinkling stage. Gemini has a set of core instructions that, although not confirmed by Google, likely act as a diversity filter for any request for a picture of a person doing an everyday task. For some reason this was applied to all pictures of people.
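To make the middle-manager idea concrete, here is a minimal sketch of a prompt-rewriting layer sitting in front of an image model. Nothing in it reflects Google's actual instructions, which have not been published. The word lists, the modifier text, and the functions `rewrite_broad`, `rewrite_scoped` and `fake_image_model` are all hypothetical, chosen only to show how a rule meant for generic requests can misfire when it is applied to every request that mentions a person.

```python
# Hypothetical sketch of a chatbot rewriting prompts before an image model sees them.
# None of these names or rules come from Google; they are illustrative assumptions.

PERSON_WORDS = {"person", "people", "man", "woman", "king", "soldier", "doctor"}
HISTORICAL_WORDS = {"medieval", "century", "viking", "1800s"}
MODIFIER = "showing a diverse range of people"


def mentions(prompt: str, words: set) -> bool:
    """Return True if any word in `words` appears as a token in the prompt."""
    tokens = {t.strip(".,!?").lower() for t in prompt.split()}
    return bool(tokens & words)


def rewrite_broad(prompt: str) -> str:
    """Add the modifier to every prompt that mentions a person."""
    if mentions(prompt, PERSON_WORDS):
        return f"{prompt}, {MODIFIER}"
    return prompt


def rewrite_scoped(prompt: str) -> str:
    """Add the modifier only when the prompt has no historical context."""
    if mentions(prompt, PERSON_WORDS) and not mentions(prompt, HISTORICAL_WORDS):
        return f"{prompt}, {MODIFIER}"
    return prompt


def fake_image_model(prompt: str) -> str:
    """Stand-in for the image generator; a real system would call an image API."""
    return f"<image for: {prompt}>"


def generate(prompt: str, rewrite) -> str:
    """The 'middle manager' step: rewrite the prompt, then forward it."""
    return fake_image_model(rewrite(prompt))


if __name__ == "__main__":
    for p in ["a doctor at work", "a medieval English king"]:
        print("broad :", generate(p, rewrite_broad))
        print("scoped:", generate(p, rewrite_scoped))
```

In this toy version the broad rule changes the historical prompt as well as the everyday one, while the scoped rule leaves the historical prompt alone. A real system would rely on the language model's own judgment rather than keyword lists, which is also why its behavior is harder to predict.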

Yishan Wong, former Reddit CEO and founder of climate change reforestation company Terraformation, wrote on X that the historical figure issue likely took Google by surprise.

“This is not an unreasonable thing to do, given that they have a global audience,” he said of the diversity filter. “Maybe you don’t agree with it, but it’s not unreasonable. Google most likely did not anticipate or intend the historical-figures-who-should-reasonably-be-white result.”

What are the implications?

Ryan Carrier, CEO of AI certification and security agency forHumanity, told Tom’s Guide there is likely a “hard coded diversity filter” inside the Gemini instructions. He said this is “similar to engaging in affirmative action for images” and could be illegal.

He told Tom’s Guide: “Given the recent court rulings that affirmative action amounts to reverse discrimination, this ‘diversity’ filter is on the wrong side of the law, not to mention a meaningful deviation from accuracy.”

I tried to replicate some of the prompts, around generating pictures of people from Medieval England, using ImageFX, the AI image tool from Google Labs that is also built on Imagen 2. This effectively bypasses the diversity filter in Gemini and queries the model directly.

It correctly reflected the historical reality, rather than applying a color or race filter to the images and meeting whatever “positive discrimination” rules are in place within Gemini.

“This event is significant because it is [a] major demonstration of someone giving a LLM a set of instructions and the results being totally not at all what they predicted,” declared Wong.

What can Google do to fix the problem?

It isn’t necessarily a middle manager issue. ChatGPT works in a similar way when sending requests to the DALL-E 3 model, and both Sora and the new Stable Diffusion 3 models use a transformer architecture and filters when generating images or video.

The problem seems to sit firmly within the restrictions placed on Gemini by Google, which the search giant says it will fix, admitting that it “missed the mark.”

“Gemini’s AI image generation does generate a wide range of people. And that’s generally a good thing because people around the world use it. But it’s missing the mark here,” Jack Krawczyk, senior director for Gemini Experiences, said on Wednesday.

Krawczyk was forced to go private on X after the backlash, hiding his tweets from the majority of users, but he first said: “We’re working to improve these kinds of depictions immediately.”